"Do expected utility maximizers have commitment issues?" - Paul de Font-Reaulx (University of Michigan)

Philosophy and Phenomenological Research (Published online, 2025)

Can we rationally trust our future selves?

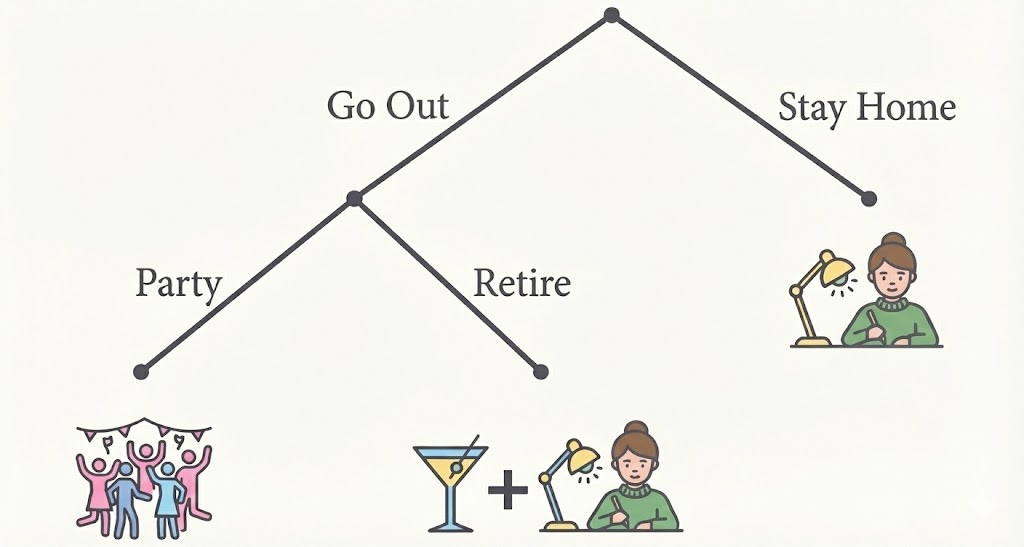

Suppose that you are thinking about whether to go out for a drink tonight with a friend. You know that you have to finish work later in the evening, but think it would be nice to have a little break before that. However, you also realize that if you go out and have a drink, there is a high probability that you will find yourself wanting to stay out longer and forsake your responsibilities.

What should you do?

We might take inspiration from rational choice theory. According to the standard theory, expected utility theory (EU-theory, for short), rational agents act to satisfy their preferences as effectively as they can, given their guesses about how to do so. However, in “temptation” cases like that above, this gets complicated, because we expect our preferences to change.

It is widely believed that EU-theory tells us to stay home, just as Ulysses ties himself to the mast to resist the temptation of the sirens. Otherwise, it seems we would end up with a workless night, which we desperately want to avoid. However, there is something funky about that conclusion.

We always prefer to have at least one drink to staying home. Yet EU-theory tells us to stay home. In other words, it seems to recommend choosing an option that is always worse by our own lights. What’s worse, this seems true whenever we expect our preferences to fluctuate over time, which is arguably a common human experience. This raises questions about whether EU-theory can really be a good theory of rational choice for fickle beings like ourselves. Let’s call this the Commitment Problem for EU-theory.

Critics have taken the Commitment Problem to undermine the appeal of EU-theory, proposing that we replace it with an alternative decision theory that recommends that agents follow through on commitments, come hell or high water. Such theories of “resolute choice” would tell us to go out and then leave early, even though we would by then want to stay out. But it seems foolhardy for someone like Ulysses to leave himself untied, insofar as his later self might simply stop caring about being rational at all.

The only thing everyone seems to agree on in these debates is that EU-theory tells us to tie ourselves to the mast, whether literally or figuratively. In this paper, I argue that this is typically false.

The expected utility of trusting yourself

One major difference between Ulysses and ourselves is that we get to act again tomorrow, and after that. Ulysses, on the other hand, is likely steering his ship towards his demise if he follows the sirens. This means that if our current choice impacts outcomes beyond tonight, that can change what is rational by the lights of EU-theory.

We might think that whether or not I go out for a drink tonight, and whether or not I stay out, should not make any causal difference to the future beyond tonight, since I get to choose whether I want to do the same thing tomorrow. That is almost true, with a very important exception: If I face the same choice tomorrow, then I will have learned something by observing what I did today, namely whether I can trust myself to follow through on a commitment.

If I decide to go out for a drink tonight with a plan to head home early, but then find myself choosing to stay out late, I will have learned that I can’t trust myself to follow through on such plans. Consequently, it will be rational for me tomorrow to stay home. By contrast, if I go out and do go home early, I will have learned that I can trust myself, thus making it more rational for me tomorrow to go out for one drink again.

In other words, my choice tonight can make a causal difference to what is rational for me to do tomorrow. Insofar as I care about getting at least one drink on future nights, my later self might maximize their expected utility by leaving early, even when they would prefer to stay out, all else equal.

This dynamic can be understood as an incentive to build a good reputation with oneself in the same way that we have to build good reputations with other people.

To illustrate, suppose that I am an unscrupulous accountant who would prefer to do a sloppy job for a client if I can get away with it. If I will never interact with a client again, and do not expect them to tell anyone about my performance, I would maximize my expected utility by doing a sloppy job. However, if they might hire me again, I have an incentive to do a proper job to increase the probability that they do.

The same argument goes for time-slices of ourselves: I can decide whether to “hire” my later self to make a decision on my behalf, which might be better or worse than me just staying home. And I should only do so if I trust myself to make the decision that is better by my current responsible lights. Meanwhile, my later self faces an incentive to prove themselves trustworthy with this responsibility so that they might be given the opportunity to have at least a little fun in the future too.
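The learning step behind this reputation dynamic can be sketched numerically. The following is a minimal illustration, not the model from the paper: it assumes two hypothetical "types" of later self (one that usually leaves early, one that usually stays out), and every probability and utility is a made-up value chosen only to show the direction of the effect.

```python
# Toy sketch of learning whether to trust oneself. All numbers are
# illustrative assumptions, not values taken from the paper.

P_LEAVE_IF_TRUSTWORTHY = 0.9    # assumed chance a trustworthy self leaves early
P_LEAVE_IF_UNTRUSTWORTHY = 0.2  # assumed chance an untrustworthy self leaves early

U_HOME = 0.0        # utility of staying home (baseline)
U_ONE_DRINK = 1.0   # utility of going out and leaving early
U_STAY_OUT = -3.0   # utility, by tonight's responsible lights, of a workless night


def update_trust(prior, left_early):
    """Bayes' rule: revise the probability that I am the trustworthy type."""
    if left_early:
        like_t, like_u = P_LEAVE_IF_TRUSTWORTHY, P_LEAVE_IF_UNTRUSTWORTHY
    else:
        like_t, like_u = 1 - P_LEAVE_IF_TRUSTWORTHY, 1 - P_LEAVE_IF_UNTRUSTWORTHY
    return prior * like_t / (prior * like_t + (1 - prior) * like_u)


def expected_utility_of_going_out(trust):
    """Expected utility of going out, given my current trust in my later self."""
    p_leave = trust * P_LEAVE_IF_TRUSTWORTHY + (1 - trust) * P_LEAVE_IF_UNTRUSTWORTHY
    return p_leave * U_ONE_DRINK + (1 - p_leave) * U_STAY_OUT


def should_go_out(trust):
    """Go out only if that beats staying home in expectation."""
    return expected_utility_of_going_out(trust) > U_HOME


if __name__ == "__main__":
    prior = 0.8  # assumed initial trust in my later self
    for left_early in (True, False):
        trust = update_trust(prior, left_early)
        print(left_early, round(trust, 3), should_go_out(trust))
```

Under these assumed numbers, observing that I left early raises my trust and keeps going out worthwhile tomorrow, whereas observing that I stayed out lowers it enough that staying home becomes the better option.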

Solving the Commitment Problem

This suggests that it can be rational for us to go out and then leave early, even if we have faced a preference reversal, insofar as we care about the future. However, that’s not saying very much about when it is in fact rational. In the main part of the paper, I formalize this argument using a so-called Bayesian reputation game.

The details of this are rather complicated and will be skipped over here. The main result, however, is that given relatively weak assumptions, the following claim is true: If we expect to face a handful of similar situations in the future, then it is rational to make a commitment and follow through on it.

This result holds even when we are very confident that our preferences will change, when they do in fact change, and when our later self discounts the value of the future substantially. The reason is that the value of a good reputation is high enough to outweigh the expected utility of satisfying the temptation.
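To get a feel for why a handful of future occasions can be enough, consider a back-of-the-envelope calculation. This is a deliberately crude stand-in for the Bayesian reputation game, under the assumption that leaving early secures one drink on each of `n` future nights while staying out forfeits them all. The function name and every number are hypothetical.

```python
def nights_needed(temptation, drink_value=1.0, discount=0.5, max_nights=50):
    """Smallest number of future nights n for which the discounted value of
    future drinks is at least the one-off gain from giving in to temptation.

    Returns None if no n up to max_nights suffices.
    All parameters are illustrative assumptions, not values from the paper.
    """
    future_value = 0.0
    for n in range(1, max_nights + 1):
        # A drink n nights from now is worth drink_value * discount**n today.
        future_value += drink_value * discount ** n
        if future_value >= temptation:
            return n
    return None


if __name__ == "__main__":
    print(nights_needed(0.9))  # heavy discounting, strong temptation
    print(nights_needed(2.0))  # temptation exceeds all future drinks combined
```

With these made-up values, a later self who halves the value of each successive night still has reason to leave early once four future nights are at stake and the temptation is worth 0.9 of a drink. If the temptation is worth more than all future drinks combined, no number of nights suffices in this toy setup.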

What does this mean? The primary upshot is that, contrary to what most people have assumed, EU-theory typically tells us to follow through on commitments. In other words, EU-theory does not face the Commitment Problem that its critics claimed it did. This undermines the case for replacing EU-theory with alternatives like resolute choice, insofar as such a replacement comes with independent costs. We can both have our cake and eat it.

Importantly, this does not mean that EU-theory always tells us to trust our later selves. For example, in cases like that of Ulysses, EU-theory would plausibly still recommend that he tie himself to the mast. The reason is that there is a substantial probability that his later self will simply be irrational and not respond to the incentives he faces, and that this later self would not expect a future in which having a good reputation with oneself would be a valuable thing.

When the stakes are high enough, we should plausibly play it safe. The upshot of our discussion is that we need not always do so: often we can do better by trusting ourselves instead.

There are more questions that one can raise about whether EU-theory gets all the cases right. There is also a question about how this Bayesian argument relates to other game-theoretic ideas, such as iterated prisoner’s dilemmas. Finally, some readers might be interested in the formal details. For all of those questions, I recommend that the reader check out the full paper.

Or, if you prefer, go for a drink instead.
